the vulnerable world hypothesis

and what happens when we flip it

The first paper I read for this newsletter was Nick Bostrom’s “The Vulnerable World Hypothesis” (2019). Here’s the core idea, followed by a thought experiment: what happens if we flip it?

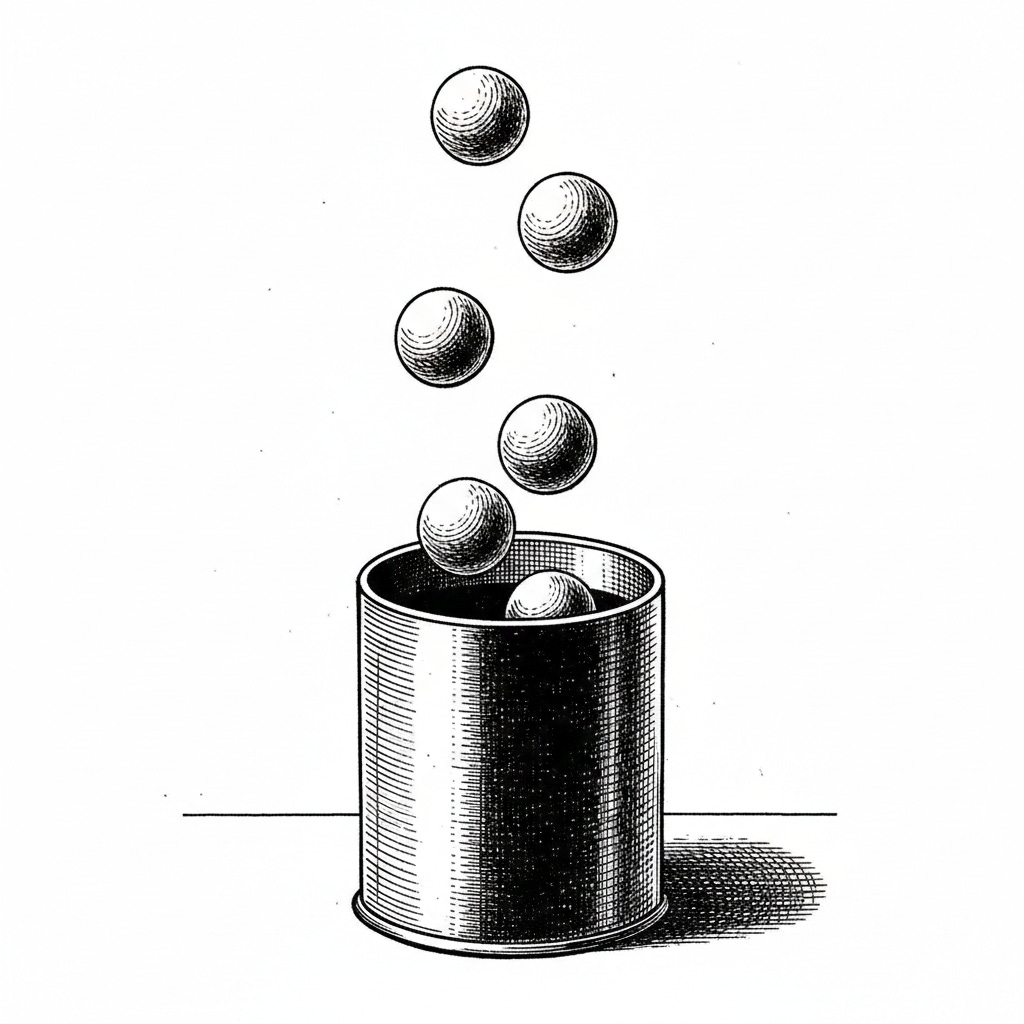

the urn metaphor

Bostrom asks you to imagine humanity pulling balls from an urn. Each ball represents a possible technological discovery or invention. We don’t know what’s in the urn before we pull; we just keep pulling throughout history.

Most balls are white: beneficial or at least manageable. Agriculture, antibiotics, the internet. Some are gray, like nuclear weapons or fossil fuels: powerful but dangerous, and managed through regulation, deterrence, and luck.

Bostrom is concerned with black balls: technologies that are both catastrophically destructive and easy to access. It’s the combination that makes them dangerous. Nuclear weapons are catastrophically destructive but hard to build; you need nation-state resources. Bioweapons are easier to create, but most aren’t civilization-ending. A black ball would be something like a deadly pandemic pathogen that anyone could synthesize in a garage.

His argument: we don’t know if the urn contains black balls, but if it does, we’ll eventually pull one. And once it’s discovered, you can’t un-discover it.

the easy nukes problem

His clearest example is “easy nukes”: a hypothetical where you could build a nuclear-level weapon from widely available materials using information anyone could access.

Right now, nuclear weapons are constrained by difficulty. Building them requires enriched fissile material, precision engineering, delivery systems, and nation-state-level resources. This difficulty is why only a handful of countries have them, and why we’ve so far avoided nuclear terrorism.

Now imagine someone discovers you can create a nuclear explosion using common household materials and a design that fits on a single page.

In that world, deterrence doesn’t work. Defense doesn’t work. Preventing catastrophe would require what Bostrom calls “high-tech totalitarianism”: pervasive surveillance of everyone, everywhere, all the time. Otherwise, you just have to get lucky forever. Every potential bad actor, everywhere in the world, has to choose not to use the technology, permanently.

That’s not a bet he thinks civilization can win.

why this matters for ai

Bostrom wrote this before the current AI boom, but the framework maps onto it. AI is a capability amplifier: it makes things easier to do, whether beneficial or harmful.

The question isn’t whether AI itself is a black ball. It’s whether AI might help pull black balls from the urn by making dangerous technologies easier to discover or implement.

An AI that is good at biology research could accelerate drug discovery and also make it easier to engineer dangerous pathogens. An AI that is good at chemistry could help develop new materials and also help design more accessible weapons. Bostrom’s framework clarifies what makes certain dual-use technologies especially concerning: the harmful use is both catastrophic and easy.

a thought experiment: easy positive things

Bostrom focuses on technologies that are destructive and easy. What about technologies that are beneficial and easy?

Same framework: imagine pulling a ball from the urn that represents a capability that’s clearly beneficial and trivially easy to access. Sounds unambiguously good. More beneficial capabilities, more widely distributed.

The picture gets more complicated at scale.

when everyone can do the thing

What if everyone could code?

With AI assistance, we’re getting close. Someone with no programming background can build functional applications using AI tools. The barrier to creating software has dropped dramatically. In a sense, English has become the most popular programming language.

On an individual level, this is clearly good. More people can build things. Creativity is less constrained by technical skill, and ideas become reality faster.

At scale, it’s different. Most of my code is now written by AI. That means one engineer can do work that used to take a team. Companies are hiring fewer engineers, especially fewer juniors and interns. The job market for engineers is cold.

Nowadays I mostly set direction and review the AI’s output: designs, plans, code changes. If AI can go from a single prompt to code that doesn’t need review (and eventually, it will), what happens to the profession?

“Everyone can do it” doesn’t mean “everyone gets hired to do it.” It might mean the opposite.

Take a higher-stakes example: medicine. If diagnosis became highly accurate and trivially accessible (anyone scans at home and gets expert-level results instantly), the individual benefits are clear: earlier detection, better outcomes, lower costs. The system-level effects are less obvious. What happens to healthcare infrastructure when continuous self-diagnosis becomes normal? What happens to medical expertise when the easy cases stop reaching doctors?

a concrete case: frictionless hiring

Let me make this more concrete with recruitment AI, since that’s what I actually work on.

Traditional hiring had natural friction: humans screening resumes, conducting phone screens, scheduling interviews, sending follow-ups. A company could only push so many candidates through its funnel.

That friction created constraints. You couldn’t recruit for every role simultaneously at full intensity. You had to prioritize which roles mattered most. The difficulty of hiring meant you hired deliberately. Candidate volume was naturally limited by what humans could process.

AI removes most of that friction. Screen thousands of candidates automatically, conduct initial conversations at scale, coordinate schedules instantly, maintain perfect follow-up. The system runs at whatever intensity you want.

For individual companies: faster hiring, lower costs, better candidate experience, more consistent evaluation.

But what happens when every company has this capability?

For candidates, applying gets easier everywhere, but each role draws more applicants, competition intensifies, and processes move faster. The bar keeps rising because everyone’s tools keep improving.

For companies, recruiting gets easier, but standing out gets harder, retention gets shorter, and there’s pressure to always be hiring because competitors are.

What got lost? The forcing function that made hiring deliberate rather than a continuous background process. The natural rate limiting that prevented constant churn. The friction that made “are we sure we need this person?” a question you had to answer.

What got amplified? The speed of hiring cycles. The volume of applications. The efficiency of matching, but also the efficiency of churning through candidates and roles.

“Frictionless” isn’t the same as “better” at a systemic level. Each company optimizing its own hiring makes sense locally, but what does the equilibrium look like when everyone does it?

Bostrom was writing about existential risk. The frictionless hiring problem isn’t that. But the underlying structure is the same: a capability that optimizes locally while shifting the equilibrium in ways individual participants can’t see from inside the system. Sometimes the friction was load-bearing. You don’t realize it until it’s gone.

AI removes that friction, and with it the filter that forced us to decide what was worth the effort. That makes one question harder to ignore: what should we be building? That’s an alignment question too, and not just at the model level.
